Robots.txt Generator For WordPress | Best robots.txt generator

Concerned that content you don’t want indexed is showing up in search engines? It is usually a good thing when search engines visit and index your website, but in some cases they index pages that you don’t want people to see. A robots.txt generator is a handy tool for dealing with this.

Suppose you have created special content for people who have subscribed to your site, but due to some error that content is accessible to regular visitors as well. Or perhaps pages that you never meant to appear in search results have become visible to many people. One way to address this is to tell search engines which pages to skip by using the robots meta tag. But not every crawler honors every meta tag, so to be doubly sure you should also use a robots.txt file.

Robots.txt is a text file that tells search robots which pages they should not crawl. It is a plain-text file, so don’t confuse it with an HTML one. Robots.txt is sometimes mistaken for a firewall or a password-protection mechanism, but it is neither: it only asks well-behaved crawlers to stay away from the listed paths, and the file itself is publicly readable. Data that is truly confidential should also be protected with a login or similar access control. One of the most frequently asked questions about robots.txt is how to create a robots.txt file for SEO. In this article we will answer that question.

A robots.txt file has a specific format that should be kept in mind. If a line is malformed, search robots may ignore that rule or interpret it differently from what you intended. Below is the basic format for a robots.txt file:

User-agent: [user-agent name]

Disallow: [URL string not to be crawled]

Just keep in mind that the file must be saved as plain text, named robots.txt, and placed in the root folder of your site.
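As a rough illustration of what a generator does with this format, here is a minimal Python sketch that assembles robots.txt text from a list of rules. The function name `build_robots_txt` and its parameters are made up for this example and are not taken from any particular tool.

```python
def build_robots_txt(groups, sitemap=None):
    """Assemble robots.txt text.

    groups:  list of (user_agent, rules) pairs, where rules is a list of
             (directive, path) pairs such as ("Disallow", "/admin/").
    sitemap: optional sitemap URL appended at the end of the file.
    """
    lines = []
    for user_agent, rules in groups:
        lines.append(f"User-agent: {user_agent}")
        for directive, path in rules:
            lines.append(f"{directive}: {path}")
        lines.append("")  # a blank line separates groups
    if sitemap:
        lines.append(f"Sitemap: {sitemap}")
    # Finish with exactly one trailing newline.
    return "\n".join(lines).rstrip("\n") + "\n"


if __name__ == "__main__":
    text = build_robots_txt(
        [("*", [("Disallow", "/admin/")])],
        sitemap="http://www.yoursite.com/sitemap.xml",  # placeholder URL
    )
    print(text, end="")
```

The returned string can then be saved as robots.txt in the root folder of the site.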

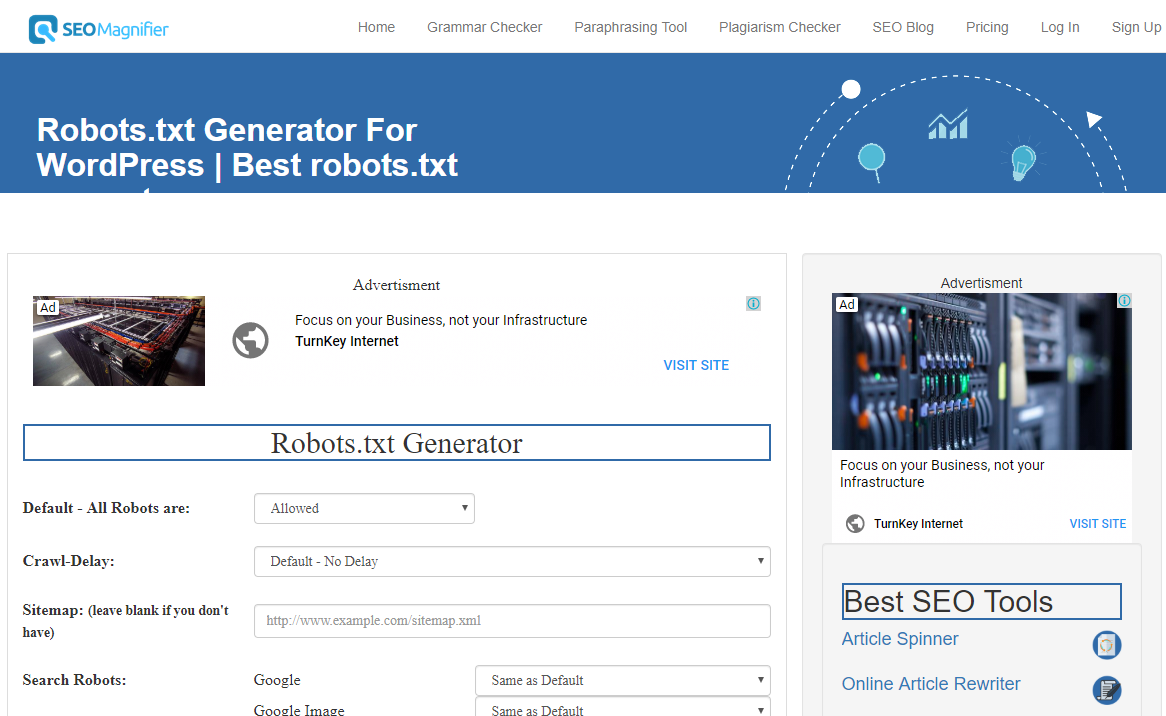

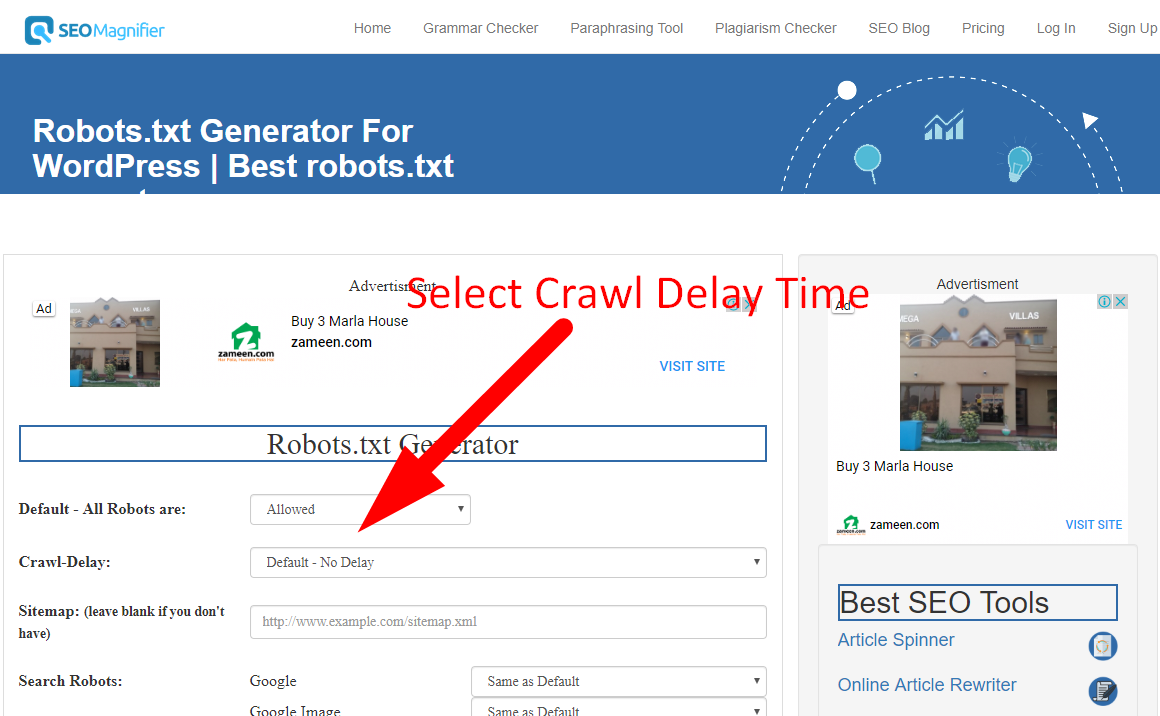

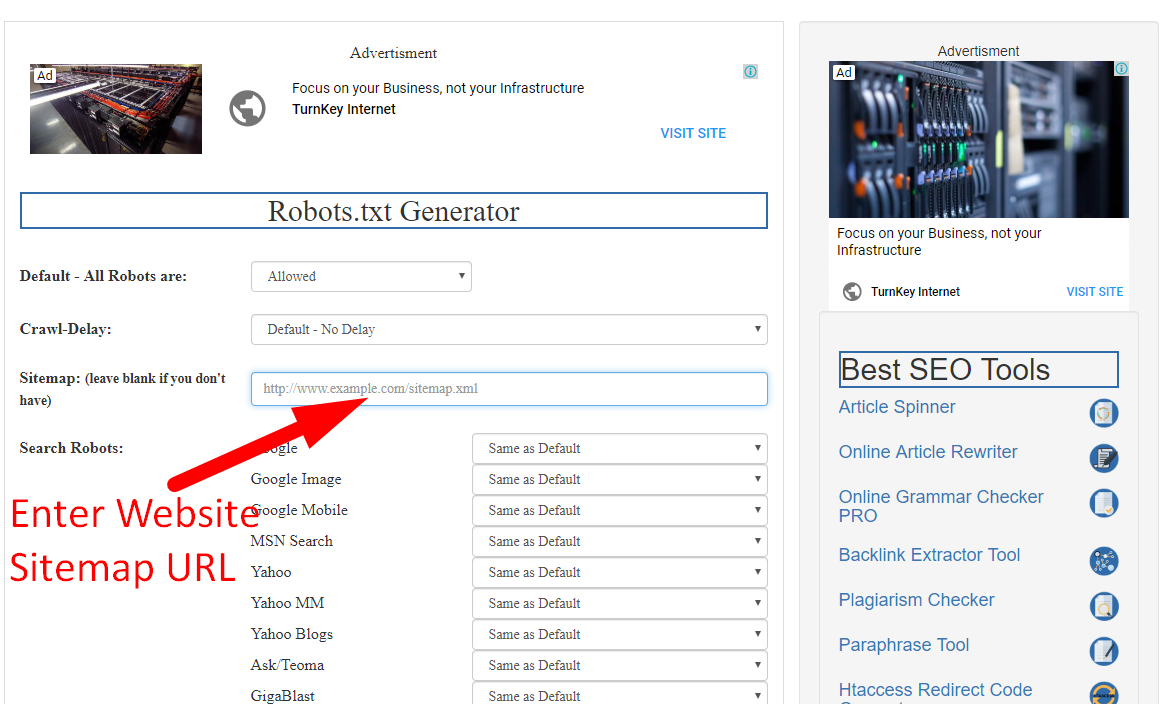

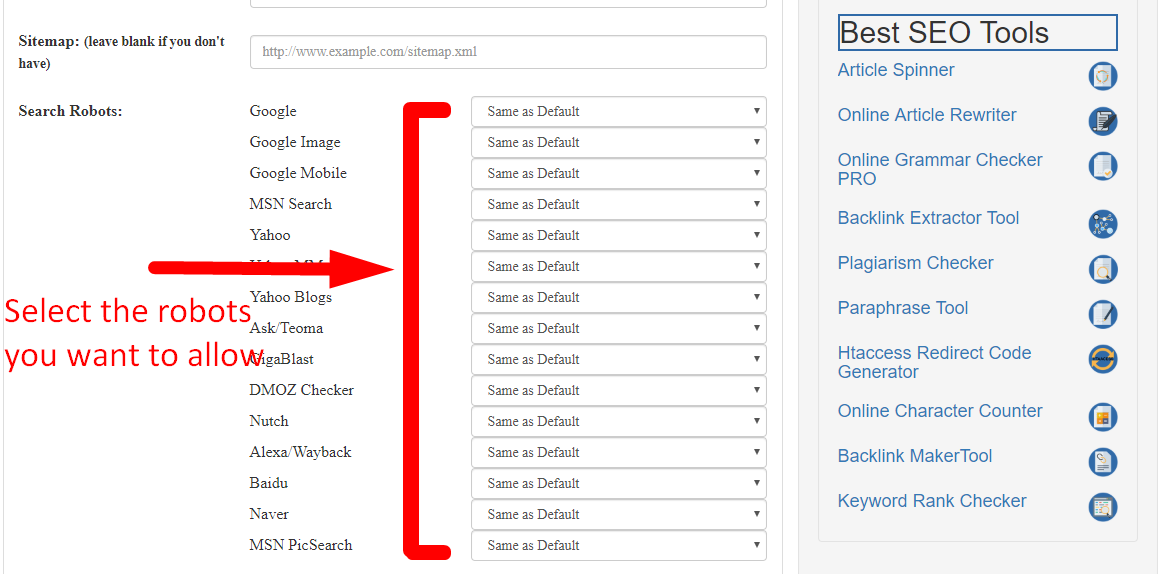

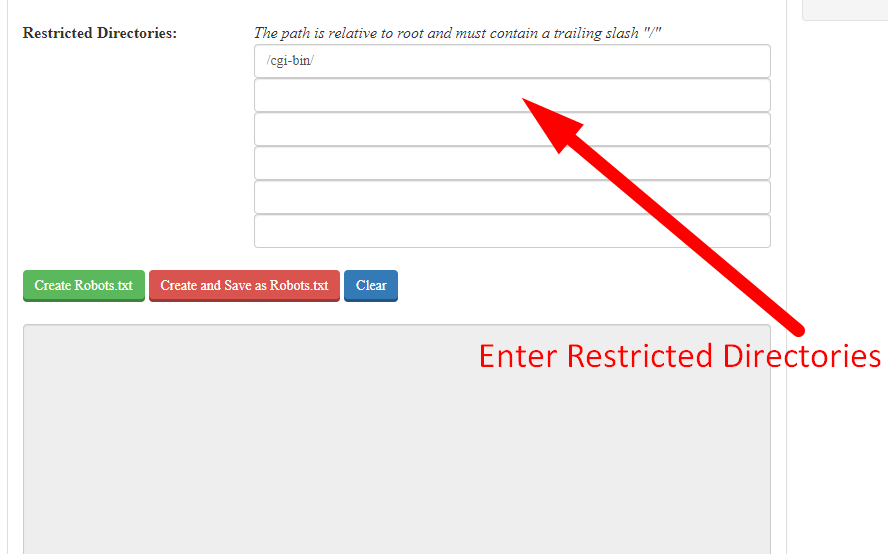

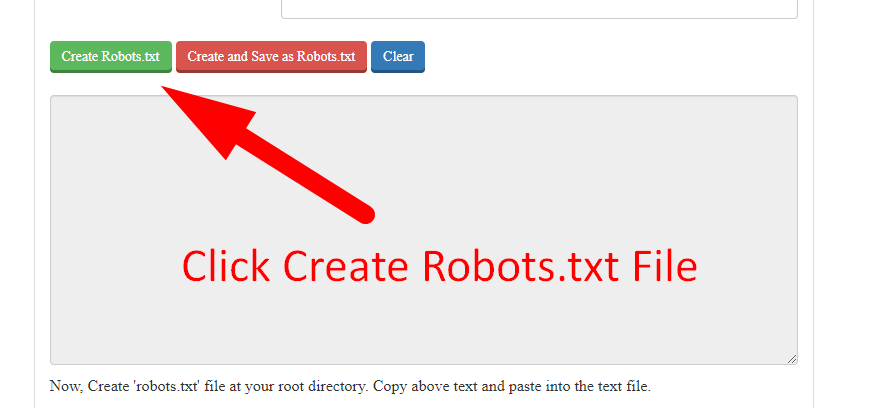

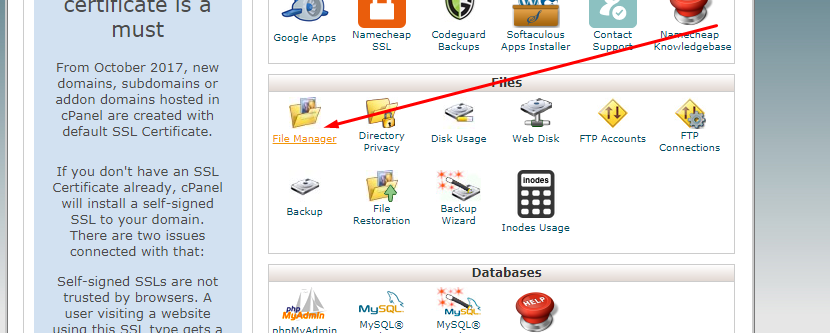

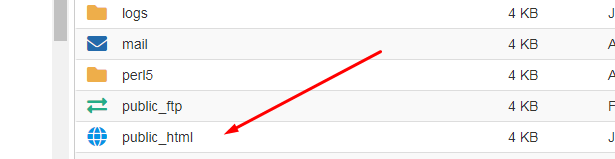

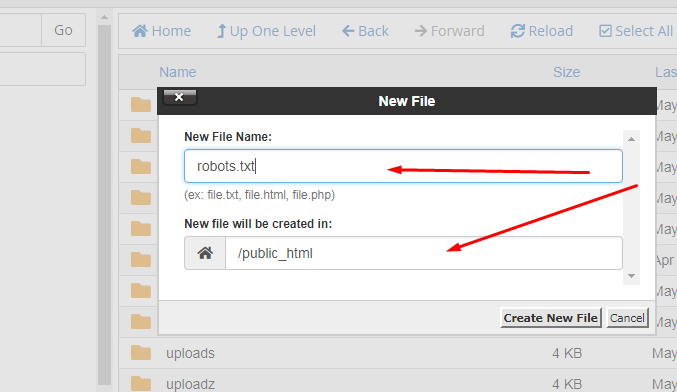

A custom robots.txt generator for Blogger is a tool that helps webmasters keep their websites’ private pages from being indexed by search engines. In other words, it generates the robots.txt file for you. It has made life much easier for website owners, as they don’t have to write the whole robots.txt file by hand. They can easily make the file by using the steps below:

By following these steps you can easily create a robots.txt file for your website.

If you already have a robots.txt file, you should review it carefully and make sure it is properly optimized and free of errors, so that the right files stay out of search results. For a robots.txt file to be optimized for search engines, you have to decide clearly what should go under an Allow directive and what should go under a Disallow directive. Image folders, content folders, and so on should be listed under Allow if you want that data to be accessible to search engines and other people. Disallow should be used for things like duplicate web pages, duplicate content, duplicate folders, and archive folders.
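One way to examine an existing file for formatting mistakes is a small script. The sketch below is only an assumption about what such a check might look like: `lint_robots_txt` is a hypothetical helper, it knows just the four fields used in this article, and it is far less thorough than a real validator.

```python
KNOWN_FIELDS = {"user-agent", "allow", "disallow", "sitemap"}


def lint_robots_txt(text):
    """Return a list of (line_number, message) problems found in text."""
    problems = []
    seen_user_agent = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        if ":" not in line:
            problems.append((number, "missing ':' between field and value"))
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field not in KNOWN_FIELDS:
            problems.append((number, f"unknown field '{field}'"))
        elif field == "user-agent":
            seen_user_agent = True
            if not value:
                problems.append((number, "User-agent has no value"))
        elif field in ("allow", "disallow"):
            if not seen_user_agent:
                problems.append((number, "rule appears before any User-agent line"))
            elif value and not value.startswith(("/", "*")):
                problems.append((number, "path should start with '/'"))
    return problems


if __name__ == "__main__":
    sample = "Disallow: /admin/\nUser-agent: *\nDisalow: /blog/\nAllow images/\n"
    for number, message in lint_robots_txt(sample):
        print(f"line {number}: {message}")
```

An empty result only means that none of these simple checks failed, not that the file does what you intend.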

A robots.txt file is not strictly required for WordPress. However, creating one is good SEO practice, because it lets you control what search engines crawl. You can easily create a WordPress robots.txt file that disallows search engines from accessing some of your data by using rules like the ones below:

User-agent: *

Disallow: /admin/

Disallow: /admin/*?*

Disallow: /admin/*?

Disallow: /blog/*?*

Disallow: /blog/*?

If you have a sitemap, add its URL as:

Sitemap: http://www.yoursite.com/sitemap.xml
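The `*` in rules such as `/blog/*?` is a wildcard that stands for any sequence of characters, and crawlers that follow RFC 9309 also treat a trailing `$` as an end-of-URL anchor. The following Python sketch shows roughly how such a pattern can be matched against a URL path. The helper names are hypothetical, and real crawlers add further details such as percent-encoding normalization and longest-match precedence.

```python
import re


def rule_to_regex(pattern):
    """Translate a robots.txt path pattern into a compiled regular expression."""
    anchored = pattern.endswith("$")  # a trailing '$' pins the match to the end
    if anchored:
        pattern = pattern[:-1]
    # Escape the literal pieces and let every '*' match any run of characters.
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))


def rule_matches(pattern, path):
    """Return True if the path (including any query string) matches the pattern."""
    return rule_to_regex(pattern).match(path) is not None


if __name__ == "__main__":
    for pattern, path in [
        ("/blog/*?", "/blog/post?replytocom=1"),
        ("/blog/*?", "/blog/post"),
        ("/admin/", "/admin/settings?x=1"),
    ]:
        print(pattern, path, rule_matches(pattern, path))
```

Note that `/admin/` on its own already matches every URL under that folder, so the two wildcard lines for `/admin/` are redundant rather than harmful.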

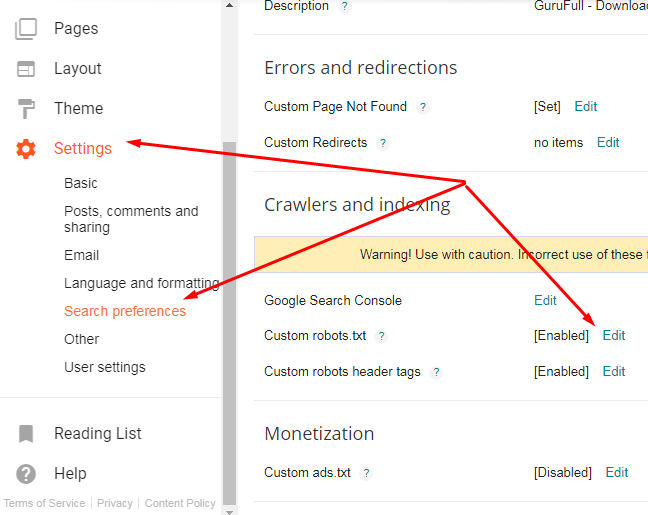

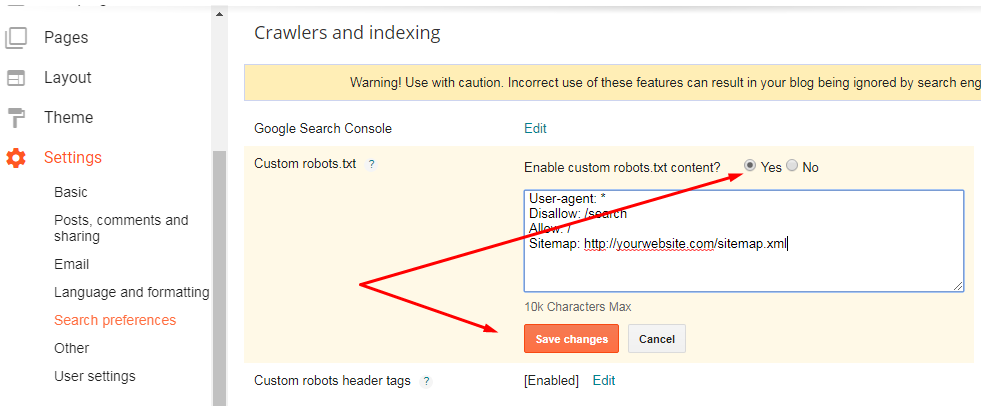

Since Blogger already has a robots.txt file built in, you don’t have to worry much about it. However, the default file may not cover everything you need. In that case you can easily alter the robots.txt file in Blogger according to your needs by following the steps below:

4. From there, go to the “Custom robots.txt” tab, click “Edit”, and then select “Yes”.

5. After that, paste the contents of your robots.txt file there to add more restrictions to the blog. You can also use a custom robots.txt generator for Blogger.

6. Then save the settings and you are done.

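Following are some robots.txt templates.

To allow all robots to access the whole site:

User-agent: *

Disallow:

OR

User-agent: *

Allow: /

To block all robots from the whole site:

User-agent: *

Disallow: /

To block all robots from a specific folder:

User-agent: *

Disallow: /folder/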
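To see what each template does in practice, you can feed it to Python's standard `urllib.robotparser` module and ask whether a given URL may be fetched. This is a sketch only: `is_allowed` is a hypothetical helper, `www.yoursite.com` is a placeholder, and a real search engine's parser may differ in details.

```python
from urllib.robotparser import RobotFileParser

# The templates from this article, keyed by a short description.
TEMPLATES = {
    "allow all (empty Disallow)": "User-agent: *\nDisallow:\n",
    "allow all (Allow: /)": "User-agent: *\nAllow: /\n",
    "block all": "User-agent: *\nDisallow: /\n",
    "block one folder": "User-agent: *\nDisallow: /folder/\n",
}


def is_allowed(robots_txt, url, user_agent="*"):
    """Parse robots.txt text and report whether user_agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for name, text in TEMPLATES.items():
        for path in ("/index.html", "/folder/page.html"):
            allowed = is_allowed(text, "http://www.yoursite.com" + path)
            print(f"{name:28} {path:20} {'allowed' if allowed else 'blocked'}")
```

Running a check like this before uploading a file is a cheap way to catch a template that blocks more than you meant it to.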

There is no rocket science behind using the SEO Magnifier robots file creation tool. Simply follow these steps to generate your file.